“So a low tech website is necessarily a static site.” I would therefore like to be able to convert a dynamic Kirby website into static pages. I was sent this starting point for a static converter; however, I am new to this and am having difficulty implementing it. Could anyone help me by sharing some instructions on where this file should be located, which parts should be edited, etc.?

This simple tool copies entire websites, maintains the same structure, and includes all relevant media files too (e.g., images, PDFs, style sheets). It has a clean and easy-to-use interface: you literally paste in the website URL and press Enter.
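As a rough illustration of what "maintains the same structure" can mean when dynamic pages are saved as static files, here is a minimal Python sketch. It is not the converter mentioned above and not how Kirby or any tool named here works. The function names `url_to_local_path` and `same_site_links`, the `static` output folder, and the `example.com` URLs are all assumptions made for the example.

```python
from html.parser import HTMLParser
from pathlib import PurePosixPath
from urllib.parse import urljoin, urlparse


def url_to_local_path(url, out_dir="static"):
    """Map a page URL to a file path that mirrors the site's structure."""
    path = urlparse(url).path
    if path == "" or path.endswith("/"):
        # Directory-style URLs ("/", "/blog/") get an index file.
        path += "index.html"
    elif PurePosixPath(path).suffix == "":
        # Extensionless URLs ("/about") become "/about/index.html".
        path += "/index.html"
    return str(PurePosixPath(out_dir) / path.lstrip("/"))


class LinkCollector(HTMLParser):
    """Collect every href and src attribute value found in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                self.links.append(value)


def same_site_links(html, base_url):
    """Return absolute, de-duplicated links that stay on base_url's host."""
    parser = LinkCollector()
    parser.feed(html)
    host = urlparse(base_url).netloc
    found = []
    for link in parser.links:
        # Resolve relative links and drop "#fragment" parts.
        absolute = urljoin(base_url, link).split("#")[0]
        if urlparse(absolute).netloc == host and absolute not in found:
            found.append(absolute)
    return found
```

A full converter would still need to fetch each page, save it at the mapped path, and repeat for every link it finds; the sketch only covers the path mapping and link discovery.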

After reading a recent topic asking for recommendations about carbon neutral hosting providers, I’m happy to see Kirby’s team trying to do what they can to fight climate change. In an effort to reduce my work’s environmental footprint, I have recently been trying to incorporate low tech design principles. As my good friend and design researcher Gauthier Roussilhe recently wrote in his article Digital guide to low tech, inspired by Low Tech Magazine’s approach to building low tech websites, “A static page is generated once for 1000 requests, a dynamic page is generated 1000 times for 1000 requests.”

It is easy to set up, regularly monitors your data for quality, and organizes and updates it according to your requirements. Moreover, WebWhacker can duplicate the directory structure of your site and is compatible with Vista, XP and Windows 7. If you're on a Mac, your best option is SiteSucker.
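The quoted comparison between static and dynamic pages can be made concrete with a small counting sketch in Python. It is a toy model only: `serve_dynamic`, `serve_static`, and `count_renders` are hypothetical names, and the "page" is a fixed string rather than a real Kirby template.

```python
def serve_dynamic(render, requests):
    """Render the page again for every request."""
    return [render() for _ in range(requests)]


def serve_static(render, requests):
    """Render once, then hand the same output to every request."""
    page = render()
    return [page for _ in range(requests)]


def count_renders(serve, requests=1000):
    """Count how many times the page is generated while serving requests."""
    calls = 0

    def render():
        nonlocal calls
        calls += 1
        return "<h1>Hello</h1>"

    serve(render, requests)
    return calls


print(count_renders(serve_dynamic))  # one render per request
print(count_renders(serve_static))   # a single render, reused
```

Both functions return the same responses; only the amount of generation work differs, which is the point the quote makes.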

Website eXtractor is known for its user-friendly interface and a control panel that helps you structure and organize any data in a better way. It is compatible with all Windows versions and iPhone devices.

SiteSucker is mainly a Mac program that downloads websites over your internet connection. It can easily copy the desired pages, pictures, style sheets and PDFs to your hard drive for offline use. You just need to enter the URL in the SiteSucker interface, and it will automatically download the desired data. This program requires Mac OS X 10.11 and can be downloaded from the SiteSucker website.

Grab-a-Site is one of the most powerful and comprehensive web extraction tools. It works both offline and online and can create copies of a website multiple times, along with its supporting files such as graphics, pages, sound files, and videos. This tool can also grab files from different sites at the same time, saving a lot of time and energy. It is compatible with ASP, PHP, Cold Fusion and JR languages and can turn them into static HTML.

Blue Squirrel's latest browser is called WebWhacker 5. This free program works on Windows 7, Vista, XP, Windows Me, and Win98. It can copy an entire site to your hard disk for offline users and serves as a training site for tutors and students.

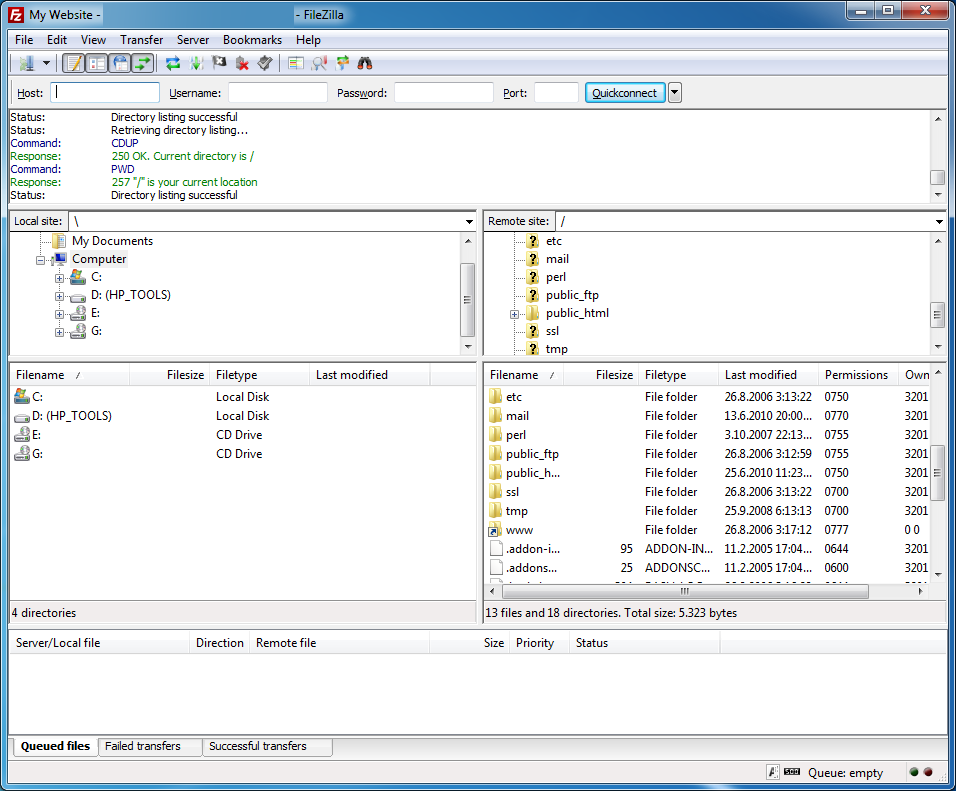

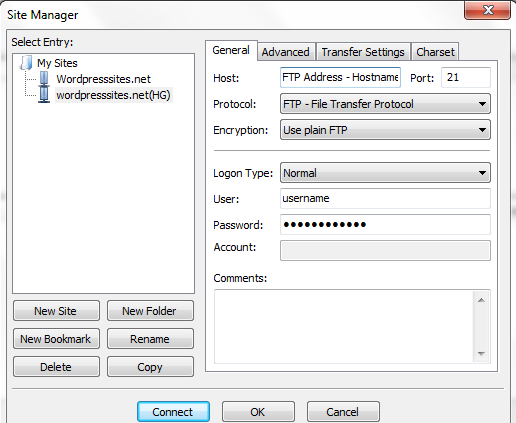

Whether you need data for your business, research or work, there is nothing worse than waiting for pages to load in Netscape Navigator or Internet Explorer. While surfing the internet, you may face a slow connection and lose most of the data you need. If you are viewing a heavy website or blog with thousands of pages, you have to click your mouse numerous times to get those pages opened properly. Thankfully, special web extractor programs make it easy to load your favorite sites without any problem. A web extractor is a program that helps researchers, journalists, students, businessmen, and marketers extract data conveniently and view web pages at high speed. Some of the most reliable tools are described here.

SurfOffline is one of the best and most famous web extractors on the internet. With this tool, you can decide how many elements you would like to download, and there is no limit on the number of pages to be extracted. Also, you don't need FTP access to your website to download the data. This tool is compatible with Windows, Vista, XP and other similar operating systems. It is one of the coolest and most widely known web extraction programs.

Website eXtractor is good for both programmers and non-programmers and makes your online research easy and fast. You can download the entire website or parts of it using this powerful tool.

FTP is a way to transfer files between hosts over the internet. It is especially helpful as a way to upload or download files to or from a site quickly. The NIFC FTP site is the approved location for posting and retrieving mobile maps. FTP clients allow connections from both anonymous and registered users. When the goal is to limit who can perform the file transfer, the login is often set up to require a username and password.
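The difference between anonymous and registered FTP logins can be sketched with Python's standard `ftplib` module. This is a minimal illustration, not a description of any site mentioned here: the helper names `connect` and `download`, the host `ftp.example.com`, and the file name `map.pdf` are placeholders invented for the example.

```python
from ftplib import FTP


def connect(host, user=None, password=None):
    """Open an FTP session, anonymously unless credentials are given."""
    ftp = FTP(host)
    if user is None:
        # With no arguments, ftplib logs in as the "anonymous" user.
        ftp.login()
    else:
        ftp.login(user, password or "")
    return ftp


def download(ftp, remote_name, local_path):
    """Fetch one file in binary mode and write it to local_path."""
    with open(local_path, "wb") as handle:
        ftp.retrbinary(f"RETR {remote_name}", handle.write)


# Example usage (placeholder host and file name, so left commented out):
# ftp = connect("ftp.example.com")                      # anonymous
# ftp = connect("ftp.example.com", "alice", "secret")   # registered user
# download(ftp, "map.pdf", "map.pdf")
# ftp.quit()
```

Plain FTP sends credentials unencrypted, so a server that restricts access with a username and password is often paired with FTPS or SFTP in practice.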
